The Pocket team brought me in to work on a problem they were seeing in their usage data: signups via their mobile apps were resulting in significantly lower retention rates than signups on desktop browsers. This seemed counterintuitive given Pocket's emphasis on anytime-anywhere utility, and the reasons behind the statistic were still unknown. Pocket wanted to uncover the hidden causes and come up with a solution to boost their retention rate for mobile signups. They had planned a series of design sprints in conjunction with the design partners at Google Ventures (one of Pocket's investors), and I joined the Pocket team as a dedicated in-house designer for the sprints.

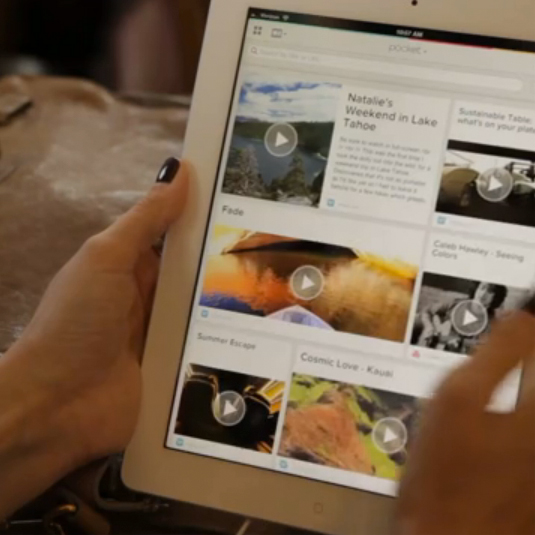

Before we actually brought in the partners from Google Ventures, I wanted to better understand what we were up against. So I set up a quick study of Pocket's mobile apps (iPhone, iPad, and Android) by running a series of tests on Usertesting.com. I've used services like this as a fast, inexpensive, low-commitment method of getting feedback from people on a product or idea. In this case, I just wanted to watch some people engage with the app, hear what they had to say, and hopefully identify some of the issues in the new user experience. Here's a clip from one of the sessions:

Within the first minute, I saw a woman struggling as she tried out the add-via-email feature (one that many people gravitate towards because it seems the most familiar and straightforward). She thought it wasn't working, but in fact she was simply getting thrown off by the short delay inherent in sending an email (as well as the need to refresh her list). Clearly, this was only a small piece of the larger problem, but these tests helped surface usability issues that were previously unknown.

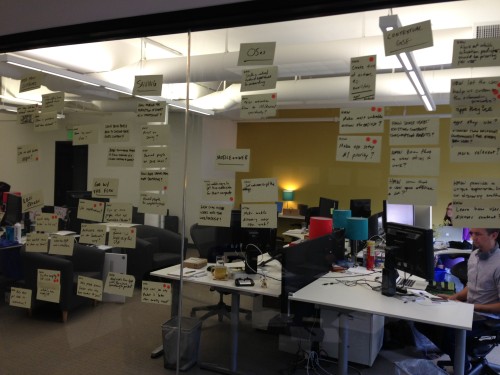

With insights from the tests in mind, we kicked off the sprints by putting up a wall full of "How Might We..." statements on Post-It notes...

... and identified which problems were most critical to address over the course of the sprints (by voting with dot stickers). Using one or more of the selected statements, we began sketching solutions. I use the term 'solutions' loosely. We weren't coming up with flows or UIs yet; we were coming up with phrases and doodles that proposed an experience. For example: pre-populate Pocket with sample content so people's first contact with the product is not an empty list.

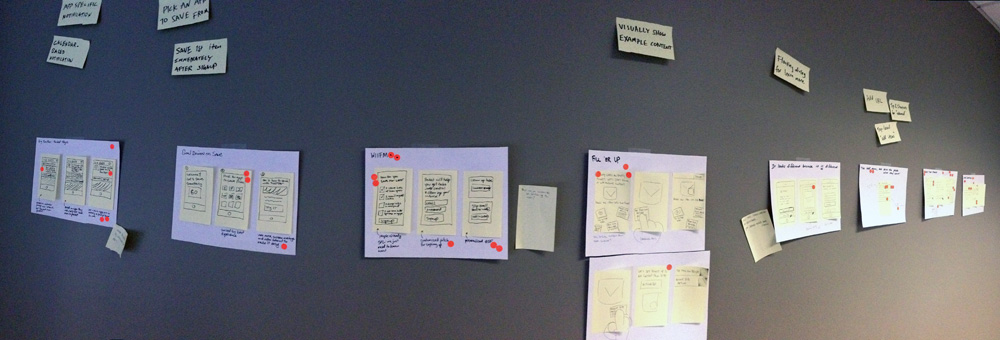

Another round of voting gave us a set of stories to tell with some rapid storyboarding. We're still not thinking about how these solutions might actually work in the product, but we have begun articulating how a series of events or experiences can address the problems we're tackling.

Yet another round of voting brings us to prototyping! Each team member took a story concept and brought it to life in a simple Keynote prototype. Now we're trying to piece together an actual flow, although we're doing so in a vacuum, not in the context of Pocket. After a few rounds of critique to get all the prototypes into a realistic, usable state, we're ready to start testing.

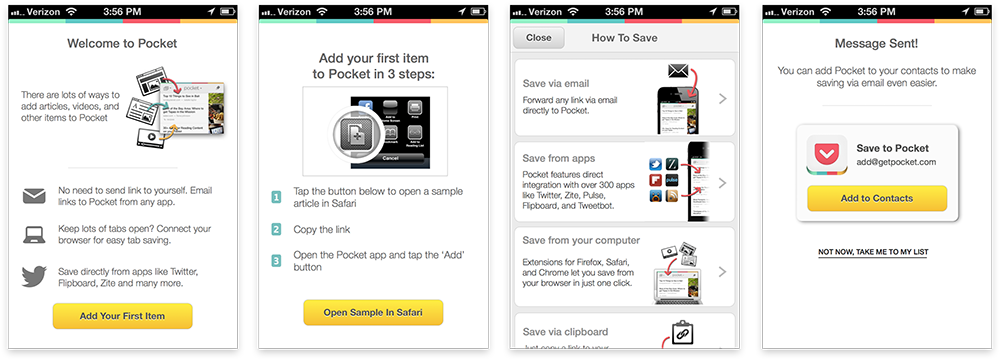

I won't go into the intricacies of running live user testing sessions with multiple prototypes, but we certainly didn't lack for feedback after the first round. From this stage, each consecutive week-long sprint involved refining the prototypes using the insights from the prior week's study and then testing them again. We repeated this until we had a single prototype that combined the best aspects of the prototypes to date, addressed all the important problems, and encapsulated the experience we wanted people to have. I built this final prototype in the Pocket style, so we could transition seamlessly from prototype to working product in user testing. Here are a few screens from the prototype (aside from the illustrations, this was all done in Keynote):

Drawing on the findings from the final round of testing with this prototype, we started to design a new onboarding flow and new user experience for the product. There were still some open questions that the testing hadn't fully answered, but testing never ends. Rather than guessing at the best solution, we set up a few A/B tests in the new product.

An article about this specific project mentions the 60% conversion uptick generated by the new designs. It's always exciting to see tangible results from design work.

You can check out Pocket for yourself on the web, iPhone, iPad, and Android.
